Making architectural decisions is usually the most nerve-wracking part of our job. Outcomes are hard to predict and sometimes there seem to be way too many avenues open to us. Let's look at one such problem and a tool we can use to arrive at a plan.

A cautionary tale?

A common problem in building a site is deciding what compromise to make between flexibility and consistency. A simple WYSIWYG text field on a page can deliver a huge amount of flexibility, at the cost of content that is hard to repurpose and a presentation that can vary wildly from page to page. In contrast, a structured, rigid set of fields can be designed to be presented beautifully and is often very easy to use, but does not allow for content that deviates from this norm.

A compromise between the two approaches is to provide a set of structured "building blocks" that can be assembled in any order to produce complex results. This will sound familiar to many readers because it describes the popular Drupal module Paragraphs. Paragraphs has become a standard tool in our arsenal when building new sites.

In 2014 we architected a project to build landing pages for a site which required exactly this "building block" approach. Paragraphs would be a great fit for this! But Paragraphs was less than a year old at the time, and the project called for content that could interact with many other systems: group membership and content access, moderation, translation, and so forth. There were quirks with these interactions that meant this approach was not viable out of the box.

So a fork in the road presented itself. Do we try to change Paragraphs to fit our needs? Do we develop something new for the site? We ended up doing custom development for a node-based approach to the problem, and were filled with regret a year or two later when Paragraphs came to dominate the space and addressed most of the concerns we had at the time.

In the end I'm not sure we can know whether we picked the correct approach—especially given the limited information we had at our disposal—but we can think about the decision process for when similar choices appear in the future.

Let's do some fake math

One way to frame this kind of analysis is with some "napkin algebra." We can identify the various factors at play and how they interrelate, and use this to shine a light on the pros and cons of different solutions.

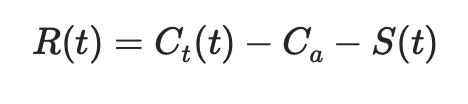

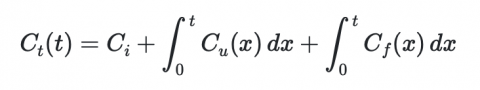

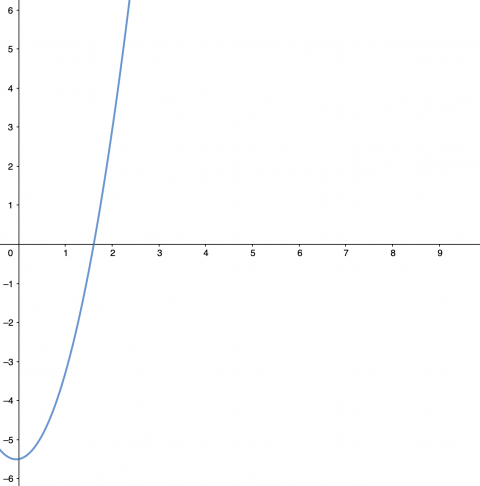

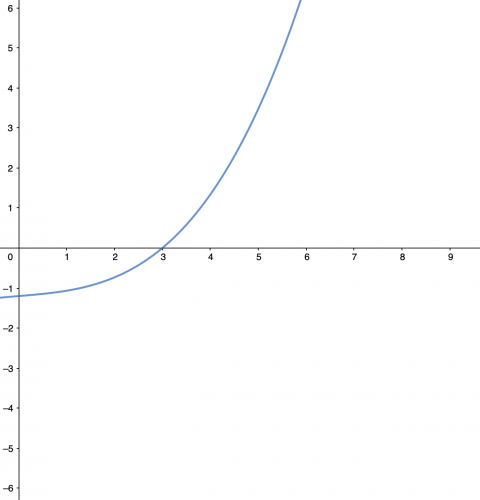

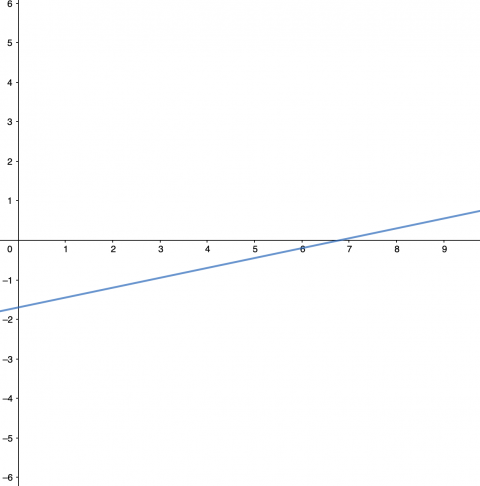

In this case, what we are really trying to minimize is the regret we have about our decision. If a decision is hard enough, there will likely come a time when we wish we had chosen differently, if only for a moment. We can measure this regret R(t) as the cost Ct(t) of the product over its lifetime, adjusted by the anticipated cost Ca and the success S(t) of the result.
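To make the napkin a little more concrete, here is a minimal sketch of that relationship in Python. The text above only says that cost is "adjusted by" anticipated cost and success, so the specific form used here (cost overrun divided by success) is purely an assumption for illustration, as are the function name, the parameter names, and every number in the example.

```python
def regret(total_cost, anticipated_cost, success):
    """Napkin regret at a single point in time.

    ASSUMPTION: regret = (total_cost - anticipated_cost) / success.
    This is one plausible reading of "cost adjusted by anticipated cost
    and success", not a formula taken from the article.
    """
    if success <= 0:
        raise ValueError("success must be positive for this sketch")
    # Overrun grows regret; a more successful result shrinks it.
    return (total_cost - anticipated_cost) / success


# Hypothetical, made-up numbers comparing two options on the same scale.
options = {
    "adapt existing module": regret(total_cost=150, anticipated_cost=100, success=2),
    "build custom solution": regret(total_cost=300, anticipated_cost=100, success=4),
}

# Pick the option with the least regret under these assumed inputs.
least_regret = min(options, key=options.get)
print(least_regret, options[least_regret])
```

The point of a sketch like this is not the numbers themselves but seeing which inputs move the result: under this assumed form, a cost overrun hurts less when the outcome is very successful, and a modest success makes even a small overrun sting.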